16 Support Vector Machines

This page is a work in progress and major changes will be made over time.

Support vector machines are a popular class of model in regression and classification settings due to their ability to make accurate predictions for complex high-dimensional, non-linear data. In survival analysis, survival support vector machines (SSVMs), are one of the few classes that can estimate survival times. However, experiments with SSVMs have demonstrated that they are best used for ranking predictions as their survival time predictions can be wildly out of range. As a consequence, SSVMs cannot easily be used to make distribution predictions. As usual this chapter starts with SVMs in the regression setting before moving to adaptions for survival analysis.

16.1 SVMs for Regression

In simple linear regression, the aim is to estimate the line \(y = b + x\beta_1\) by estimating the \(b,\beta_1\) coefficients. As the number of coefficients increases, the goal becomes to instead estimate the hyperplane that divides the higher-dimensional space into two separate parts. To visualize a hyperplane, imagine looking at a room from a birds eye view that has a dividing wall cutting the room into two halves (Figure 16.1). In this view, the room appears to have dimensions (x=left-right, y=top-bottom) and the divider is a simple line of the form \(y = b + x\beta_1\). In reality, this room is actually three dimensional and has a third dimension (z=up-down) and the divider occupies these dimensions as a hyperplane of the form \(y = b + x\beta_1 + z\beta_2\).

Continuing the linear regression example, consider a simple linear regression model where the objective is to find the \(\boldsymbol{\beta}\) coefficients that minimize \(\sum^n_{i=1} (g(x) - y)^2\) where \(y = b + x\beta_1\). In a higher-dimensional space, a penalty term can be added for variable selection to reduce model complexity, commonly of the form

\[ \frac{1}{2} \sum^n_{i=1} (g(x) - y)^2 + \frac{\lambda}{2} \|\boldsymbol{\beta}\|^2 \]

for some penalty term \(\lambda\). Minimizing this error function effectively minimizes the average difference between all predictions and true outcomes, resulting in a hyperplane that represents the best linear relationship between coefficients and outcomes.

Similarly to linear regression, support vector machines (SVMs) (Cortes and Vapnik 1995) also fit a hyperplane, \(g\), on given training data, \(\mathbf{X}\). However, in SVMs, the goal is to fit a flexible (non-linear) hyperplane that minimizes the difference between predictions and the truth for individual observations. A core feature of SVMs is that one does not try to fit a hyperplane that makes perfect predictions as this would overfit the training data and is unlikely to perform well on unseen data. Instead, SVMs use a regularized error function, which allows incorrect predictions (errors) for some observations, with the magnitude of error controlled by an \(\epsilon>0\) parameter as well as slack parameters, \(\xi, \xi^*\):

\[ \begin{aligned} & \min_{\boldsymbol{\beta},b, \xi, \xi^*} \frac{1}{2} \|\boldsymbol{\beta}\|^2 + \gamma \sum^n_{i=1}(\xi_i + \xi_i^*) \\ & \textrm{subject to} \begin{dcases} g(\mathbf{x}_i) & \geq y_i -\epsilon - \xi_i \\ g(\mathbf{x}_i) & \leq y_i + \epsilon + \xi_i^* \\ \xi_i, \xi_i^* & \geq 0 \\ \forall i\in 1,...,n \end{dcases} \end{aligned} \tag{16.1}\]

where \(g(\mathbf{x}_i) = \boldsymbol{\beta}^T\mathbf{x}_i + b\) for model weights \(\boldsymbol{\beta},b \in \mathbb{R}\) and the usual training data \((\mathbf{X}, \mathbf{t}, \boldsymbol{\delta})\).

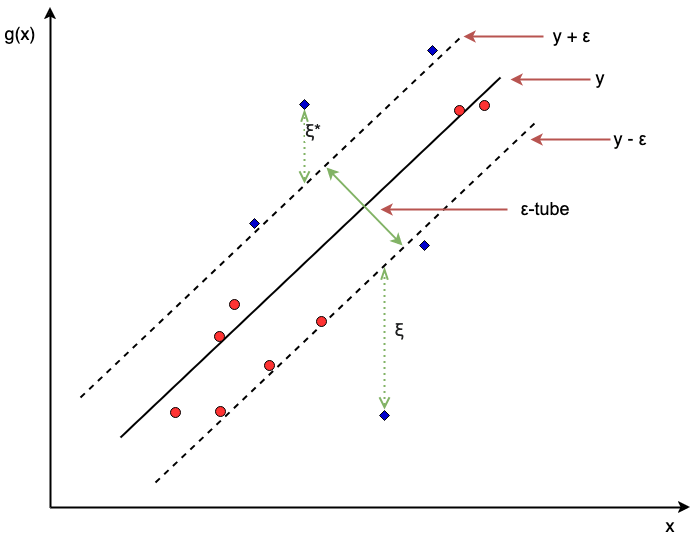

Figure 16.3 visualizes a support vector regression model in two dimensions. The red circles are values within the \(\epsilon\)-tube and are thus considered to have a negligible error. In fact, the red circles do not affect the fitting of the optimal line \(g\) and as long as they remain within the tube, the shape of \(g\) would not change. In contrast the blue diamonds have an unacceptable margin of error - as an example the top blue diamond will have \(\xi_i = 0\) but \(\xi_i^* > 0\), thus influencing the estimation of \(g\). Points on or outside the epsilon tube are referred to as support vectors as they affect the construction of the hyperplane. The \(\gamma \in \mathbb{R}_{>0}\) hyperparameter controls the slack parameters and thus as \(\gamma\) increases, the number of errors (and subsequently support vectors) is allowed to increase resulting in low variance but higher bias, in contrast a lower \(\gamma\) is more likely to introduce overfitting with low bias but high variance (Hastie, Tibshirani, and Friedman 2001). \(\gamma\) should be tuned to control this trade-off.

The other core feature of SVMs is exploiting the kernel trick, which uses functions known as kernels to allow the model to learn a non-linear hyperplane whilst keeping the computations limited to lower-dimensional settings. Once the model coefficients have been estimated using the optimization above, predictions for a new observation \(\mathbf{x}^*\) can be made using a function of the form

\[ \hat{g}(\mathbf{x}^*) = \sum^n_{i=1} \alpha_iK(\mathbf{x}^*,\mathbf{x}_i) + b \tag{16.2}\]

Details (including estimation) of the \(\alpha_i\) Lagrange multipliers are beyond the scope of this book, references are given at the end of this chapter for the interested reader. \(K\) is a kernel function, with common functions including the linear kernel, \(K(x^*,x_i) = \sum^p_{j=1} x_{ij}x^*_j\), radial kernel, \(K(x^*,x_i) = \exp(-\omega\sum^p_{j=1} (x_{ij} - x^*_j)^2)\) for some \(\omega \in \mathbb{R}_{>0}\), and polynomial kernel, \(K(x^*,x_i) = (1 + \sum^p_{j=1} x_{ij}x^*_j)^d\) for some \(d \in \mathbb{N}_{> 0}\).

The choice of kernel and its parameters, the regularization parameter \(\gamma\), and the acceptable error \(\epsilon\), are all tunable hyper-parameters, which makes the support vector machine a highly adaptable and often well-performing machine learning method. The parameters \(\gamma\) and \(\epsilon\) often have no clear apriori meaning (especially true in the survival setting predicting abstract rankings) and thus require tuning over a great range of values; no tuning usually results in a poor model fit (Probst, Boulesteix, and Bischl 2019).

16.2 SVMs for Survival Analysis

Extending SVMs to the survival domain (SSVMs) is a case of: i) identifying the quantity to predict; and ii) updating the optimization problem (Equation 16.1) and prediction function (Equation 16.2) to accommodate for censoring. In the first case, SSVMs can be used to either make survival time or ranking predictions, which are discussed in turn. The notation above is reused below for SSVMs, with additional notation introduced when required.

16.2.1 Survival time SSVMs

To begin, consider the objective for support vector regression with the \(y\) variable replaced with the usual survival time outcome \(t\):

\[ \begin{aligned} & \min_{\boldsymbol{\beta}, b, \xi, \xi^*} \frac{1}{2} \|\boldsymbol{\beta}\|^2 + \gamma \sum^n_{i=1}(\xi_i + \xi_i^*) \\ & \textrm{subject to} \begin{dcases} g(\mathbf{x}_i) & \geq t_i -\epsilon - \xi_i \\ g(\mathbf{x}_i) & \leq t_i + \epsilon + \xi_i^* \\ \xi_i, \xi_i^* & \geq 0 \\ \forall i\in 1,...,n \end{dcases} \end{aligned} \]

In survival analysis, this translates to fitting a hyperplane in order to predict the true survival time. However, as with all adaptations from regression to survival analysis, there needs to be a method for incorporating censoring.

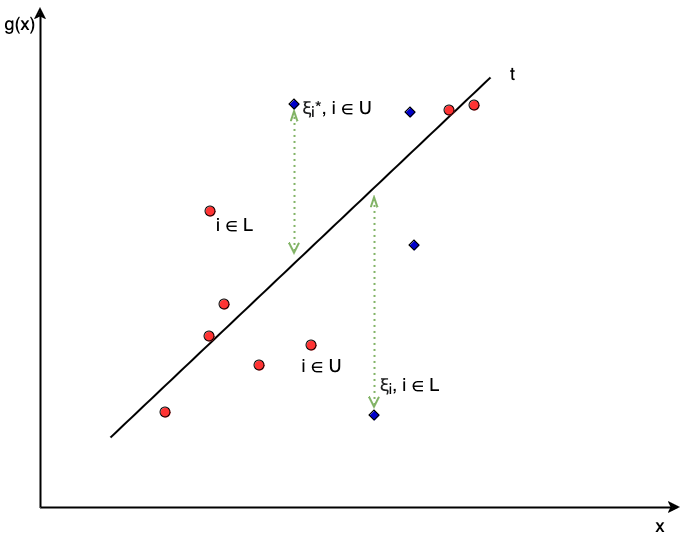

Recall the \((t_l, t_u)\) notation to describe censoring as introduced in Chapter 4 where an observation is left-censored if the survival time is known to be less than some \(t \in \mathbb{R}_{>0}\), hence \((t_l, t_u) = (-\infty, t)\), right-censored if \((t_l, t_u) = (t, \infty)\), or uncensored if \((t_l, t_u) = (t, t)\). Define \(L\) as the set of observations with a finite lower-bound time, which are those who are right-censored or uncensored, and define \(U\) as the analogous set of observations with a finite upper-bounded time.

Now consider these bounds in the context of the constraints above. The first constraint ensures the hyperplane is greater than some lower-bound created by subtracting the slack parameter from the true outcome – given the set definitions above this constraint only has meaning for observations with a finite lower-bound, \(i \in L\), otherwise \(g(\mathbf{x}_i \geq -\infty\)), which is not a particularly useful constraint. Similarly the second constraint ensures the hyperplane is less than some upper-bound, which again can only be meaningful for observations \(i \in R\). Restricting the constraints in this way leads to the optimization problem (Shivaswamy, Chu, and Jansche 2007):

\[ \begin{aligned} & \min_{\boldsymbol{\beta}, b, \xi, \xi^*} \frac{1}{2}\|\boldsymbol{\beta}\|^2 + \gamma\Big(\sum_{i \in R} \xi_i + \sum_{i \in L} \xi_i^*\Big) \\ & \textrm{subject to} \begin{dcases} g(\mathbf{x}_i) & \geq t_i -\xi_i, i \in L \\ g(\mathbf{x}_i) & \leq t_i + \xi^*_i, i \in U \\ \xi_i \geq 0, i\in L; \xi^*_i \geq 0, i \in U \end{dcases} \end{aligned} \]

If no one is censored then the optimisation is identical to the regression optimisation in (Equation 16.1). Note that in SSVMs, the \(\epsilon\) parameters are typically removed to better accommodate censoring and to help prevent the same penalization of over- and under-predictions. In contrast, one could introduce more \(\epsilon\) and \(\gamma\) parameters to separate between under- and over-predictions and to separate right- and left-censoring, however this leads to eight tunable hyperparameters, which is inefficient and may actually increase overfitting (Fouodo et al. 2018; Land et al. 2011). The algorithm can be simplified to right-censoring only by removing the second constraint completely for anyone censored:

\[ \begin{aligned} & \min_{\boldsymbol{\beta}, b, \xi, \xi^*} \frac{1}{2}\|\boldsymbol{\beta}\|^2 + \gamma \sum_{i = 1}^n (\xi_i + \xi_i^*) \\ & \textrm{subject to} \begin{dcases} g(\mathbf{x}_i) & \geq t_i - \xi^*_i \\ \delta_i g(\mathbf{x}_i) & \leq \delta_i t_i + \xi_i \\ \xi_i, \xi_i^* & \geq 0 \\ \forall i\in 1,...,n \end{dcases} \end{aligned} \]

With the prediction for a new observation \(\mathbf{x}^*\) calculated as,

\[ \hat{g}(\mathbf{x}^*) = \sum^n_{i=1} \alpha^*_i K(\mathbf{x}_i, \mathbf{x}^*) - \delta_i\alpha_i'K(\mathbf{x}_i, \mathbf{x}^*) + b \]

Where again \(K\) is a kernel function and the calculation of the Lagrange multipliers is beyond the scope of this book.

16.2.2 Ranking SSVMs

As discussed in Chapter 11, survival time predictions are actually quite rare in survival analysis and more often than not survival times are used to infer rankings between observations. However, when ranking is the primary goal, SSVMs can be more effectively trained by updating the optimization function to penalize incorrect rankings as opposed to incorrect survival times.

A natural method to optimize with respect to ranking is to penalize predictions that result in disconcordant predictions. Recall the definition of concordance from Chapter 8: ranking predictions for a pair of comparable observations \((i, j)\) where \(t_i < t_j \cap \delta_i = 1\), is called concordant if \(r_i > r_j\). Using the prognostic index as a ranking prediction (Section 6.2), a pair of observations is concordant if \(g(\mathbf{x}_i) > g(\mathbf{x}_j)\) when \(t_i < t_j\), leading to:

\[ \begin{aligned} & \min_{\boldsymbol{\beta}, b, \xi} \frac{1}{2}\|\boldsymbol{\beta}\|^2 + \gamma\sum_{i =1}^n \xi_i \\ & \textrm{subject to} \begin{dcases} g(\mathbf{x}_i) - g(\mathbf{x}_j) & \geq \xi_i, \forall i,j \in CP \\ \xi_i & \geq 0, i = 1,...,n \\ \end{dcases} \end{aligned} \]

where \(CP\) is the set of comparable pairs defined by \(CP = \{(i, j) : t_i < t_j \wedge \delta_i = 1\}\). Given the number of pairs, the optimization problem quickly becomes difficult to solve with a very long runtime. To solve this problem Van Belle et al. (2011) found an efficient reduction that sorts observations in order of outcome time and then compares each data point \(i\) with the observation with the next smallest survival time, skipping over censored observations. For example, consider the observed outcomes \(\{(1, 1), (4, 0), (2, 1), (8, 0)\}\), sorting by outcome time gives \(\{(1, 1), (2, 0), (4, 1), (8, 0)\}\). The comparable pairs are then \((i=2, j=1)\), \((i=3,j=1)\), \((i=4,j=3)\) where the censored second outcome is skipped over when finding a pair for observation \(3\). In this algorithm, the first observation is always assumed to be uncensored even if it was censored in reality. Using this reduction, the algorithm becomes

\[ \begin{aligned} & \min_{\boldsymbol{\beta}, b, \xi} \frac{1}{2}\|\boldsymbol{\beta}\|^2 + \gamma\sum_{i =1}^n \xi_i \\ & \textrm{subject to} \begin{dcases} g(\mathbf{x}_i) - g(\mathbf{x}_{j(i)}) & \geq t_i - t_{j(i)} - \xi_i \\ \xi_i & \geq 0 \\ \forall i = 1,...,n \end{dcases} \end{aligned} \]

where \(j(i)\) is the observation with the next smallest survival time compared to \(i\). Note the updated right hand side of the constraint, which plays a similar role to the \(\epsilon\) parameter by allowing ‘mistakes’ in predictions without penalty.

Prediction for a new observation \(\mathbf{x}^*\) are calculated as,

\[ \hat{g}(\mathbf{x}^*) = \sum^n_{i=1} \alpha_i(K(\mathbf{x}_i, \mathbf{x}^*) - K(\mathbf{x}_{j(i)}, \mathbf{x}^*)) + b \]

Where \(\alpha_i\) are again Lagrange multipliers.

16.2.3 Hybrid SSVMs

Finally, Van Belle et al. (2011) noted that the ranking algorithm could be updated to add the constraints of the regression model, thus providing a model that simultaneously optimizes for ranking whilst providing continuous values that can be interpreted as survival time predictions. This results in the hybrid SSVM with constraints:

\[ \begin{aligned} & \min_{\boldsymbol{\beta}, b, \xi, \xi', \xi^*} \frac{1}{2}\|\boldsymbol{\beta}\|^2 + \textcolor{CornflowerBlue}{\gamma\sum_{i =1}^n \xi_i} + \textcolor{Rhodamine}{\mu \sum^n_{i=1}(\xi_i' + \xi_i^*)} \\ & \textrm{subject to} \begin{dcases} \textcolor{CornflowerBlue}{g(\mathbf{x}_i) - g(\mathbf{x}_{j(i)})} & \textcolor{CornflowerBlue}{\geq t_i - t_{j(i)} + \xi_i} \\ \textcolor{Rhodamine}{\delta_i g(\mathbf{x}_i)} & \textcolor{Rhodamine}{\leq \delta_i t_i + \xi^*_i} \\ \textcolor{Rhodamine}{g(\mathbf{x}_i)} & \textcolor{Rhodamine}{\geq t_i - \xi'_i} \\ \textcolor{CornflowerBlue}{\xi_i}, \textcolor{Rhodamine}{\xi_i', \xi_i^*} & \geq 0 \\ \forall i = 1,...,n \\ \end{dcases} \end{aligned} \label{eq:surv_ssvmvb2} \]

The blue parts of the equation make up the ranking model and the red parts are the regression model. \(\gamma\) is the penalty associated with the regression method and \(\mu\) is the penalty associated with the ranking method setting \(\gamma = 0\) results in the regression SVM and \(\mu = 0\) results in the ranking SSVM. Hence, fitting the hybrid model and tuning these parameters is an efficient way to automatically detect which SSVM is best suited to a given task.

Once the model is fit, a prediction from given features \(\mathbf{x}^* \in \mathbb{R}^p\), can be made using the equation below, again with the ranking and regression contributions highlighted in blue and red respectively.

\[ \hat{g}(\mathbf{x}^*) = \sum^n_{i=1} \textcolor{CornflowerBlue}{\alpha_i(K(\mathbf{x}_i, \mathbf{x}^*) - K(\mathbf{x}_{j(i)}, \mathbf{x}^*))} + \textcolor{Rhodamine}{\alpha^*_i K(\mathbf{x}_i, \mathbf{x}^*) - \Delta_i\alpha_i'K(\mathbf{x}_i, \mathbf{x}^*)} + b \]

where \(\alpha_i, \alpha_i^*, \alpha_i'\) are Lagrange multipliers and \(K\) is a chosen kernel function, which may have further hyper-parameters to select or tune.